In this blog post, we briefly cover how to prepare a server to host a high-performance GoEthereum full node, such as one needed for Binance Smart Chain, Polygon, Avalanche or Harmony One. This guide is for developers who need to make a high volume of JSON-RPC API requests, from millions to billions, and who benefit greatly from the low latency and high bandwidth of running GoEthereum locally.

Running your own node is a much more efficient approach than using any of the free or commercial Ethereum JSON-RPC API service providers for your blockchain data read needs.

Getting a server

You cannot run the world financial system on an Amazon EC2 instance or a Raspberry Pi. The disk IO is too slow.

Demanding blockchain full nodes, also known as blockchain clients, need a server with fast NVMe SSD disks and plenty of disk space. Your best bet for affordable servers is Hetzner.

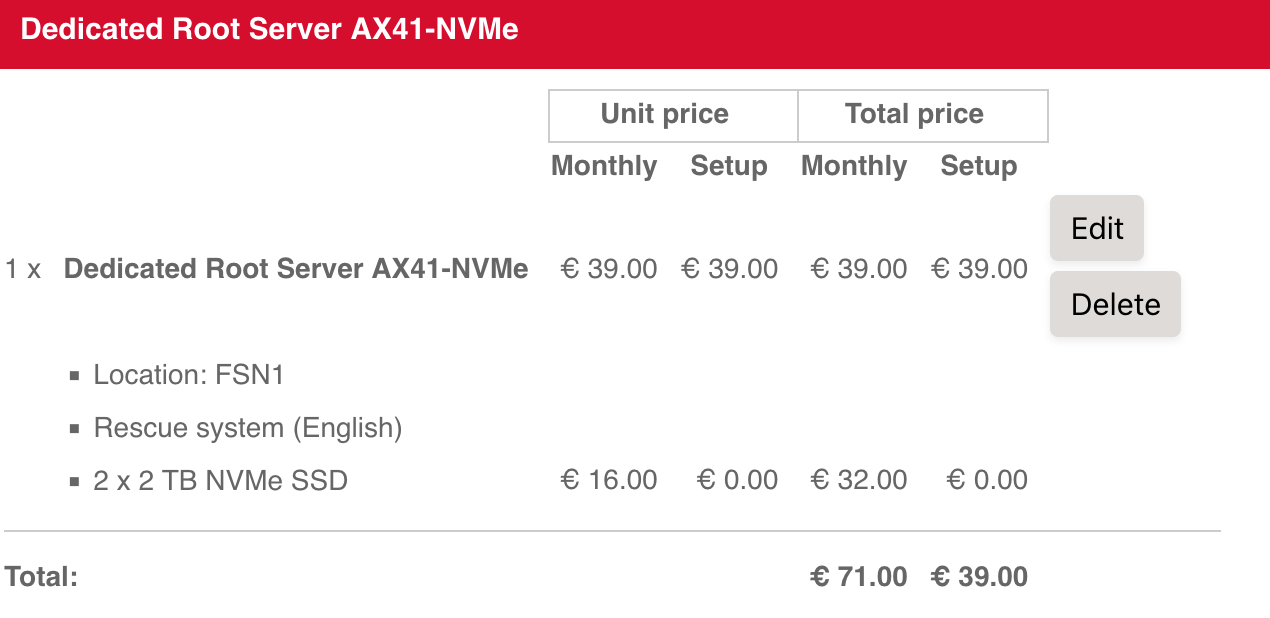

Here is a sample server with 2 x 2 TB SSD disks added.

Setting up the server and partitioning the disk

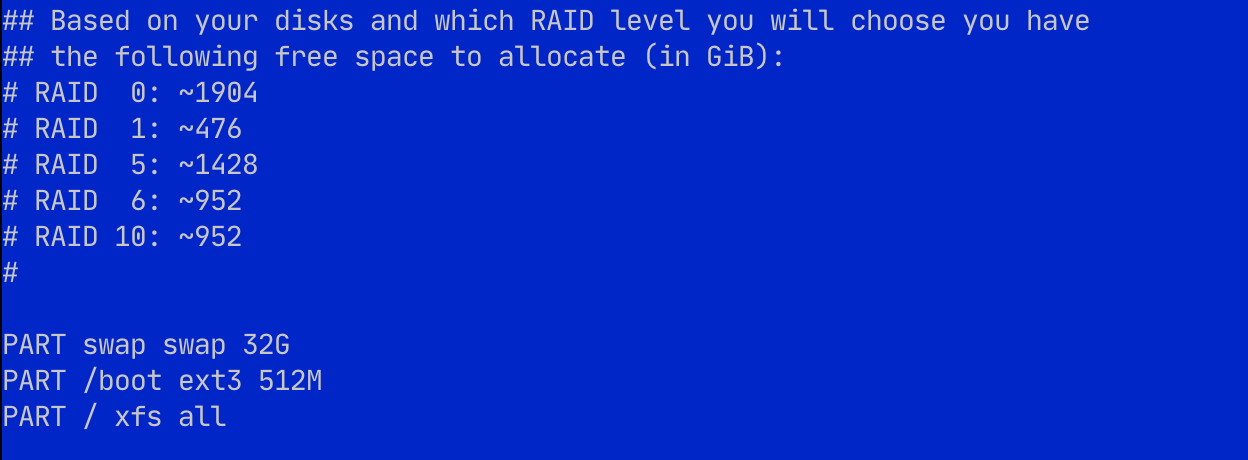

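All information on the full node is discardable - it is public data after all. Thus, to maximise disk space utilisation, we set up the 2 x 2 TB disks in RAID0, which gives us a total of 4 TB of disk space and, in theory, up to double the read and write throughput. Note that RAID0 has no redundancy: if either disk fails, the whole array is lost and the node has to be synced again.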

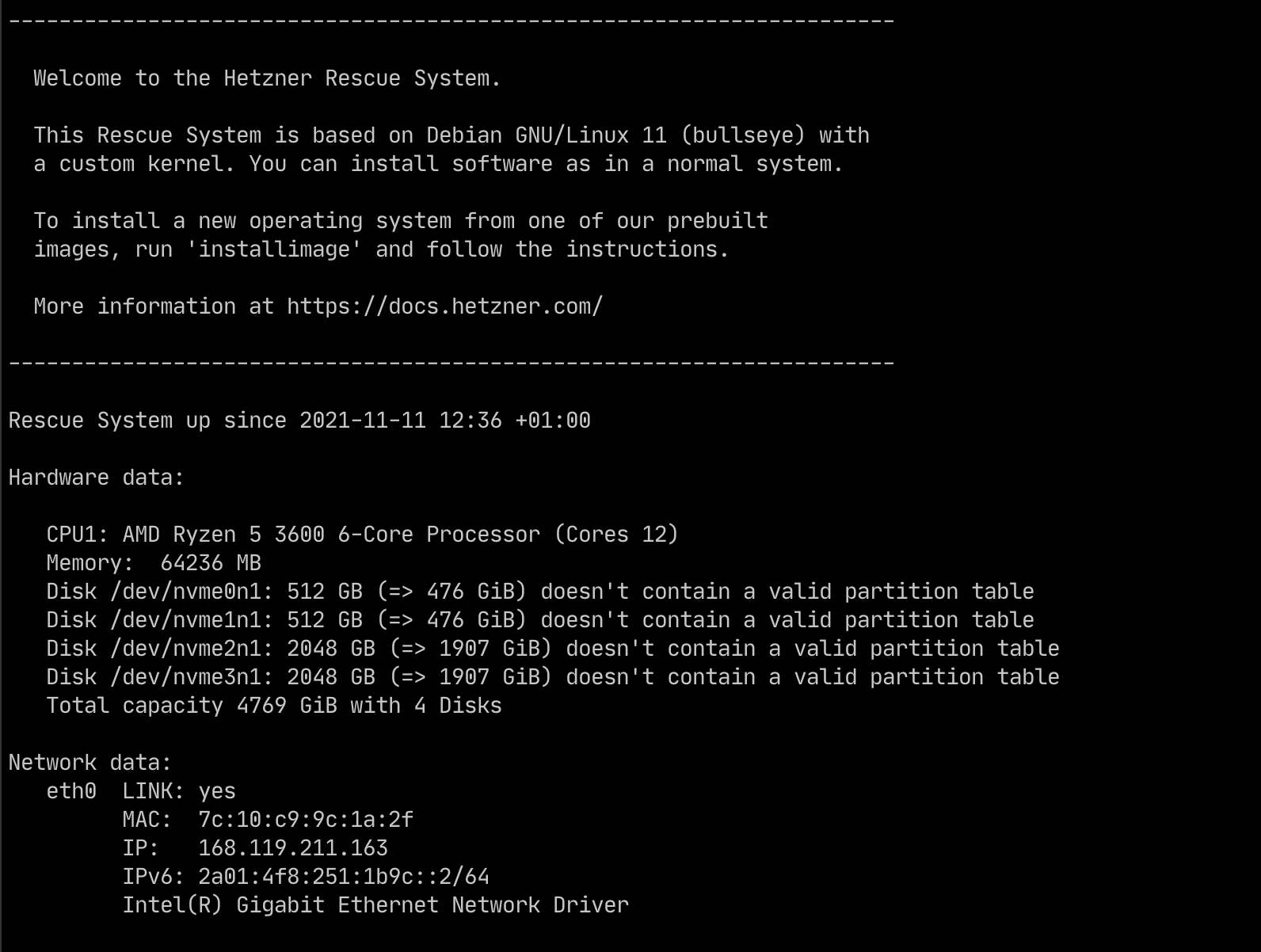

Hetzner offers a rescue tool that allows you to reinstall the operating system and partition the disks. For this step, advanced Linux administration skills are recommended.

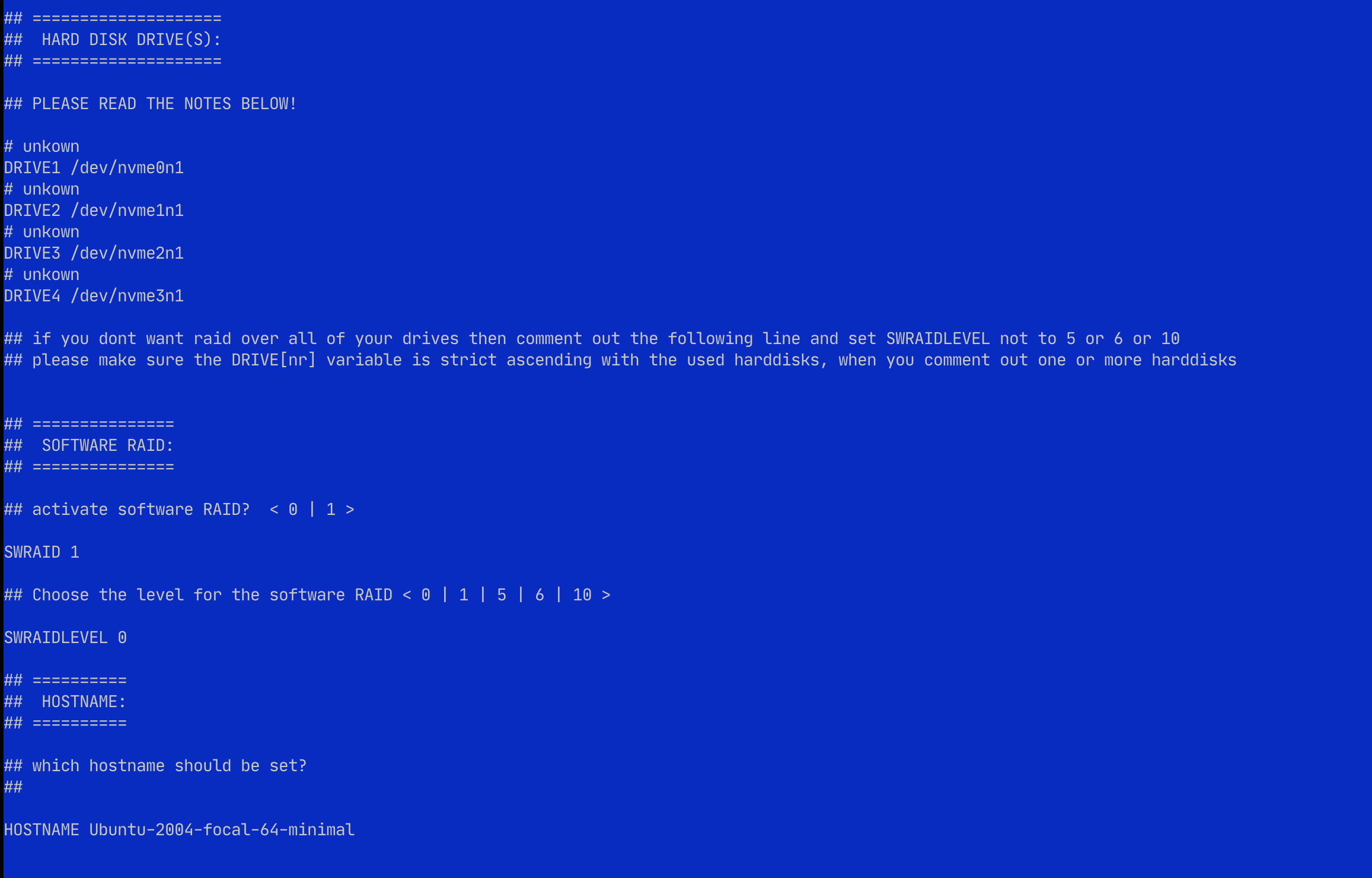

We set up the operating system using Hetzner's installimage.

The Hetzner server comes with 2 x 512 GB root disks and our additional 2 x 2 TB disks. installimage cannot handle any advanced RAID setup, so we do that later from the Linux root command prompt after booting the server. Here we just declare the root and boot partitions.

We also choose xfs over ext4, as the former tends to work better with large volumes.

Setting up the large RAID0 partition for the GoEthereum full node

Our server boots up with Ubuntu 20.04 installed and we can SSH in for the first time. We follow the RAID setup instructions from kernel.org.

First, let's see how Hetzner's installimage partitioned our disks.

fdisk --list

Disk /dev/nvme0n1: 476.96 GiB, 512110190592 bytes, 1000215216 sectors

Disk model: WDC PC SN720 SDAPNTW-512G

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: dos

Disk identifier: 0xfbb3d767

Device Boot Start End Sectors Size Id Type

/dev/nvme0n1p1 2048 33556479 33554432 16G fd Linux raid autodetect

/dev/nvme0n1p2 33556480 34605055 1048576 512M fd Linux raid autodetect

/dev/nvme0n1p3 34605056 1000213167 965608112 460.4G fd Linux raid autodetect

Disk /dev/nvme1n1: 476.96 GiB, 512110190592 bytes, 1000215216 sectors

Disk model: SAMSUNG MZVL2512HCJQ-00B00

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: dos

Disk identifier: 0x4bc0643a

Device Boot Start End Sectors Size Id Type

/dev/nvme1n1p1 2048 33556479 33554432 16G fd Linux raid autodetect

/dev/nvme1n1p2 33556480 34605055 1048576 512M fd Linux raid autodetect

/dev/nvme1n1p3 34605056 1000213167 965608112 460.4G fd Linux raid autodetect

Disk /dev/nvme2n1: 1.88 TiB, 2048408248320 bytes, 4000797360 sectors

Disk model: SAMSUNG MZVL22T0HBLB-00B00

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/md2: 920.64 GiB, 988511469568 bytes, 1930686464 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 524288 bytes / 1048576 bytes

Disk /dev/md1: 511 MiB, 535822336 bytes, 1046528 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/nvme3n1: 1.88 TiB, 2048408248320 bytes, 4000797360 sectors

Disk model: SAMSUNG MZVL22T0HBLB-00B00

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disk /dev/md0: 31.99 GiB, 34324086784 bytes, 67039232 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 524288 bytes / 1048576 bytes

lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

nvme0n1 259:0 0 477G 0 disk

├─nvme0n1p1 259:1 0 16G 0 part

│ └─md0 9:0 0 32G 0 raid0 [SWAP]

├─nvme0n1p2 259:2 0 512M 0 part

│ └─md1 9:1 0 511M 0 raid1 /boot

└─nvme0n1p3 259:3 0 460.4G 0 part

└─md2 9:2 0 920.6G 0 raid0 /

nvme1n1 259:4 0 477G 0 disk

├─nvme1n1p1 259:6 0 16G 0 part

│ └─md0 9:0 0 32G 0 raid0 [SWAP]

├─nvme1n1p2 259:7 0 512M 0 part

│ └─md1 9:1 0 511M 0 raid1 /boot

└─nvme1n1p3 259:8 0 460.4G 0 part

└─md2 9:2 0 920.6G 0 raid0 /

nvme2n1 259:5 0 1.9T 0 disk

nvme3n1 259:9 0 1.9T 0 disk

Disks nvme2n1 and nvme3n1 are untouched, so we are going to create a RAID0 group /dev/md3 out of them.

mdadm --create --verbose /dev/md3 --level=stripe --raid-devices=2 /dev/nvme2n1 /dev/nvme3n1

You get

mdadm: chunk size defaults to 512K

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md3 started.
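Because mdadm --create overwrites whatever is on the member disks, it may be worth double-checking which disks are really unused before running a command like the one above. The following is a minimal sketch, not part of the original setup: the function name unused_disks is hypothetical, and it only parses lsblk rows (name, type, parent) to find whole disks that have no partitions or RAID children. It does not detect a filesystem written directly onto a whole disk.

```shell
# List whole disks that have no partitions and no RAID/LVM children.
# Reads rows in the style of `lsblk -n -l -o NAME,TYPE,PKNAME` on stdin:
#   column 1 = device name, column 2 = type, column 3 = parent (may be empty).
# The function name is an assumption made for this sketch.
unused_disks() {
    awk '
        $2 == "disk" { disks[$1] = 1 }     # remember every whole disk
        NF >= 3      { parents[$3] = 1 }   # remember every device that has a child
        END {
            for (d in disks)
                if (!(d in parents)) print d
        }
    ' | sort
}

# Possible use on the server:
#   lsblk -n -l -o NAME,TYPE,PKNAME | unused_disks
```

On the server described here, run before creating the array, this would be expected to list only nvme2n1 and nvme3n1.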

Formatting the partition for GoEthereum

Then we format this device as XFS and set up the mount point.

mkdir /polygon

mkfs -t xfs -f /dev/md3

Test mounting the new drive

mount /dev/md3 /polygon

cd /polygon

df -h .

Filesystem Size Used Avail Use% Mounted on

/dev/md3 3.8T 27G 3.7T 1% /polygon

We have 3.8T of disk space available on our EUR 71.00/month server.

Now, as the final touch, let's add /dev/md3 to fstab so that the mount survives a reboot.

Get the UUIDs of the disks with blkid.
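The gap between the advertised 4 TB and the 3.8T that df reports is mostly a matter of units. As a rough sanity check, the sketch below adds up the byte sizes that fdisk printed for the two 2 TB disks and converts the sum to TiB. The function names are hypothetical, and the calculation ignores XFS and RAID metadata overhead.

```shell
# Sum the raw sizes (in bytes) of the RAID0 member disks.
# RAID0 stripes data without redundancy, so capacities simply add up.
raid0_capacity_bytes() {
    total=0
    for size in "$@"; do
        total=$((total + size))
    done
    echo "$total"
}

# Convert a byte count to TiB (1024^4 bytes) with two decimals.
bytes_to_tib() {
    awk -v b="$1" 'BEGIN { printf "%.2f\n", b / (1024 ^ 4) }'
}

# Example with the sizes fdisk reported for nvme2n1 and nvme3n1:
#   raid0_capacity_bytes 2048408248320 2048408248320   # 4096816496640
#   bytes_to_tib 4096816496640                         # 3.73
```

About 3.73 TiB of raw capacity is consistent with the 3.8T shown by df -h, which reports sizes in powers of 1024 and rounds upward.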

blkid

/dev/nvme0n1p1: UUID="0cce9579-0f8c-21e2-75c5-67f762482229" UUID_SUB="bbb8dee8-3b68-c2ef-e074-e2ced4433425" LABEL="rescue:0" TYPE="linux_raid_member" PARTUUID="fbb3d767-01"

/dev/nvme0n1p2: UUID="ae741f7c-00ca-fcb8-a0bb-749329fc0858" UUID_SUB="d0f74179-31dc-83e8-2016-65dd4650c82a" LABEL="rescue:1" TYPE="linux_raid_member" PARTUUID="fbb3d767-02"

/dev/nvme0n1p3: UUID="a98136a5-4d73-8f2d-117c-c6fba1fb222d" UUID_SUB="a516d587-23fe-3144-6d9b-4799e6144fb4" LABEL="rescue:2" TYPE="linux_raid_member" PARTUUID="fbb3d767-03"

/dev/nvme1n1p1: UUID="0cce9579-0f8c-21e2-75c5-67f762482229" UUID_SUB="cf16d91f-f838-0b52-cb48-241c73dd4741" LABEL="rescue:0" TYPE="linux_raid_member" PARTUUID="4bc0643a-01"

/dev/nvme1n1p2: UUID="ae741f7c-00ca-fcb8-a0bb-749329fc0858" UUID_SUB="4a4c63f5-2df8-2bc0-b755-6854d0731794" LABEL="rescue:1" TYPE="linux_raid_member" PARTUUID="4bc0643a-02"

/dev/nvme1n1p3: UUID="a98136a5-4d73-8f2d-117c-c6fba1fb222d" UUID_SUB="01c634d4-4ae7-ea19-ca63-e38ff32e4e96" LABEL="rescue:2" TYPE="linux_raid_member" PARTUUID="4bc0643a-03"

/dev/md2: UUID="30232920-f2ef-402d-ad64-32cfed2f06ca" TYPE="xfs"

/dev/md1: UUID="b3325d6d-1ce0-46d5-ba9c-4a17c4df99d6" TYPE="ext3"

/dev/md0: UUID="56ea3570-dbda-4f20-8eb8-30ea7da75e39" TYPE="swap"

/dev/nvme2n1: UUID="e27c7163-325d-13b1-227b-33fd1ce23f74" UUID_SUB="e01a5942-6016-43a0-13ea-29a5c177fa5b" LABEL="Ubuntu-2004-focal-64-minimal:3" TYPE="linux_raid_member"

/dev/nvme3n1: UUID="e27c7163-325d-13b1-227b-33fd1ce23f74" UUID_SUB="abfa183d-24cf-b3c7-a863-6ae8d92d2623" LABEL="Ubuntu-2004-focal-64-minimal:3" TYPE="linux_raid_member"

/dev/md3: UUID="09762c81-c367-4cd6-80d9-ef34e0e7dc62" TYPE="xfs"

Add an entry to /etc/fstab for /dev/md3

proc /proc proc defaults 0 0

# /dev/md/0

UUID=56ea3570-dbda-4f20-8eb8-30ea7da75e39 none swap sw 0 0

# /dev/md/1

UUID=b3325d6d-1ce0-46d5-ba9c-4a17c4df99d6 /boot ext3 defaults 0 0

# /dev/md/2

UUID=30232920-f2ef-402d-ad64-32cfed2f06ca / xfs defaults 0 0

UUID=09762c81-c367-4cd6-80d9-ef34e0e7dc62 /polygon xfs defaults 0 0
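A UUID in /etc/fstab that differs from the blkid output by even one character can stop the mount from working at boot, so it may be worth checking the file mechanically before rebooting. The sketch below is one possible way to do that. The function name and the temporary file path are assumptions made for illustration. It prints every UUID that fstab references but that is missing from a list of known filesystem UUIDs.

```shell
# Print each UUID referenced in an fstab-style file that does not appear
# in a list of known filesystem UUIDs (one UUID per line).
# The function name is hypothetical, chosen for this sketch.
missing_fstab_uuids() {
    fstab_file=$1
    known_uuids_file=$2
    # Take the first field of every line that starts with UUID=;
    # comment lines start with "#" and are skipped by the pattern.
    awk '$1 ~ /^UUID=/ { sub(/^UUID=/, "", $1); print $1 }' "$fstab_file" |
    while read -r uuid; do
        # -x: match the whole line, -F: treat the UUID as a fixed string
        grep -qxF -- "$uuid" "$known_uuids_file" || echo "$uuid"
    done
}

# Possible use on the server (as root):
#   blkid -s UUID -o value > /tmp/known-uuids.txt
#   missing_fstab_uuids /etc/fstab /tmp/known-uuids.txt
```

No output means every UUID entry in fstab matches an existing device. Running mount -a afterwards is another way to test the new entry without rebooting.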

Final test

Reboot the server and check that the partition mounts correctly after boot.

reboot now

SSH in again and check the partition is still there:

df -h /polygon

Filesystem Size Used Avail Use% Mounted on

/dev/md127 3.8T 27G 3.7T 1% /polygon

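Now you can point GoEthereum (geth) at /polygon with its --datadir option.

Note that the array shows up as /dev/md127 instead of /dev/md3 after the reboot. This typically happens when the array is not recorded in the mdadm configuration, and it is harmless as long as fstab mounts the filesystem by UUID. If the partition does not mount after the reboot, make sure the UUID in /etc/fstab matches the one printed by the blkid command.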
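Before starting a long sync, it can help to confirm that the data directory really has the expected amount of free space, for example to catch the case where /polygon is an empty directory on the root disk because the RAID volume did not mount. This is a minimal sketch under stated assumptions: the function name has_free_space and the 2 TB threshold in the usage comment are hypothetical and not taken from any GoEthereum documentation.

```shell
# Succeed only if the filesystem holding DIR has at least MIN_BYTES free.
# The function name is an assumption made for this sketch.
has_free_space() {
    dir=$1
    min_bytes=$2
    # POSIX df: -P prints one line per filesystem, -k reports 1024-byte blocks.
    # Column 4 of the second line is the available space.
    avail_kb=$(df -P -k "$dir" | awk 'NR == 2 { print $4 }')
    [ "$((avail_kb * 1024))" -ge "$min_bytes" ]
}

# Possible use (the 2 TB threshold is an arbitrary example value):
#   has_free_space /polygon 2000000000000 && geth --datadir /polygon
```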
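The /dev/md127 name usually appears when the array is assembled at boot without a matching ARRAY line in the mdadm configuration. The sketch below checks whether a configuration file already records a given array. The function name is hypothetical, the path /etc/mdadm/mdadm.conf is the usual location on Ubuntu, and the UUID must be given in mdadm's colon-separated form, which should be the same hex digits that blkid prints with dashes.

```shell
# Succeed if CONF_FILE contains an ARRAY line for the given mdadm UUID.
# mdadm prints array UUIDs as four colon-separated groups, for example
# e27c7163:325d13b1:227b33fd:1ce23f74.
# The function name is an assumption made for this sketch.
array_is_recorded() {
    array_uuid=$1
    conf_file=$2
    # -i because mdadm.conf keywords are case-insensitive
    grep -qi "^ARRAY .*UUID=$array_uuid" "$conf_file"
}

# Possible follow-up on the server (as root) if the check fails:
#   mdadm --detail --scan        # prints ARRAY lines for the running arrays
#   ...append the line for the new array to /etc/mdadm/mdadm.conf, then
#   update-initramfs -u          # rebuild the initramfs so boot sees the change
```

Since fstab mounts the filesystem by UUID, this step is optional. It only makes the device name stable across reboots.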
